Manual ingestion and transform runs required hands-on execution, monitoring, and ad-hoc fixes, which made them slow and error-prone.

We built Azure Data Factory pipelines with scheduled triggers, Databricks notebooks for transformations, and automated monitoring and alerts.

Saved ~8.5 hrs/week of manual ops (an estimated ~€38,740 per year) and made nightly data delivery faster and more reliable.

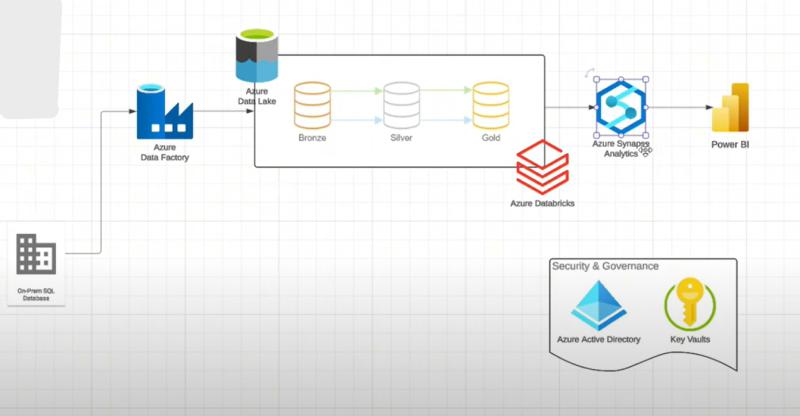

Technology Stack

Implementation Approach

Here's how we designed and built this solution:

- ADF pipelines with Lookup / ForEach / Copy activities to ingest files into a Bronze ADLS Gen2 layer.

- Databricks notebooks (silver layer) that clean the data and convert it to Parquet format.

- Synapse dedicated SQL pool for gold-layer views; nightly triggers and monitoring alerts for failures.

- Automated logging and basic retry logic to reduce manual retries.
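The "automated logging and basic retry logic" item can be illustrated with a minimal Python sketch. This is not the project's actual code: the case study does not say where the retry logic lives (ADF activities, for instance, have their own retry policy settings), so the function name `run_with_retry`, its parameters, and the log format are all hypothetical. The sketch assumes a pipeline step can be wrapped as a plain callable; it logs every attempt and retries failures with exponential backoff.

```python
import logging
import time
from typing import Callable, TypeVar

T = TypeVar("T")
logger = logging.getLogger("pipeline")  # hypothetical logger name


def run_with_retry(
    step: Callable[[], T],
    name: str,
    max_attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `step`, logging each attempt and retrying failures.

    The wait doubles after each failed attempt (base_delay, 2x, 4x, ...).
    The last failure is re-raised so monitoring can alert on it.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            result = step()
            logger.info("step=%s attempt=%d status=succeeded", name, attempt)
            return result
        except Exception:
            # logger.exception records the traceback alongside the message.
            logger.exception("step=%s attempt=%d status=failed", name, attempt)
            if attempt == max_attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))
    raise AssertionError("unreachable")
```

A nightly step would then be called as `run_with_retry(copy_files, "bronze_copy")`, where `copy_files` stands in for whatever function performs the work. The `sleep` parameter is injectable only so the backoff can be exercised without real waiting.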


Could This Work for Your Business?

- Manual processes eating 10+ hours/week

- Microsoft 365 (Teams, SharePoint, Excel)

- Similar results in 5-20 days

Then yes — let's talk.

Book Free 30-Min AuditSimilar Projects You Might Like

More automation case studies

Teams Bulk DM — €18.5k Annual Savings

Automates one-to-one Microsoft Teams outreach from an Excel list, saving ~3.25 hrs/week and ~€18.5k/year.

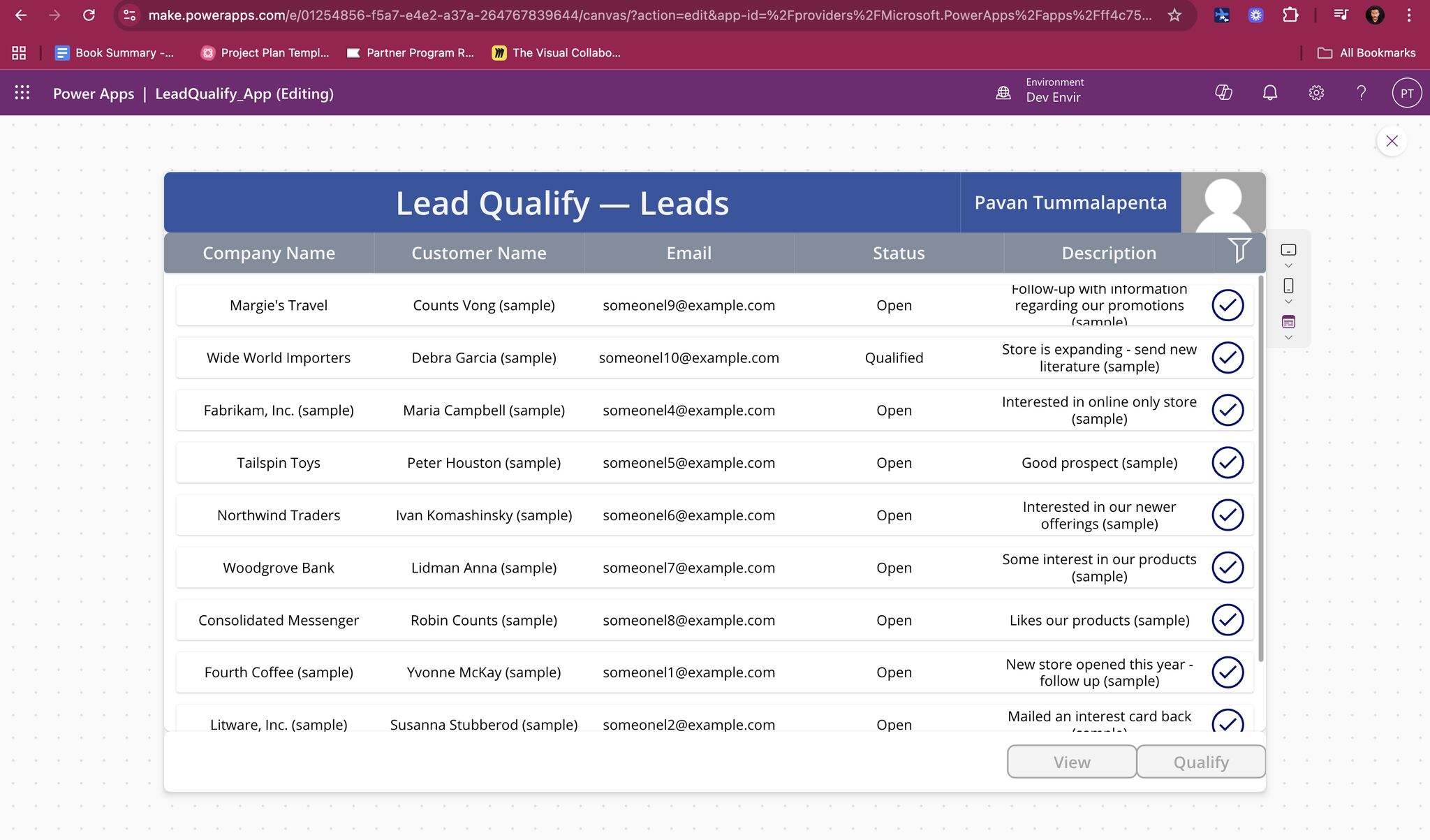

QualifyLeadAutomation — Faster Lead Qualification (≈4.2 hrs/week saved)

Canvas Power App + Power Automate flow that speeds up lead qualification in Dataverse, reducing manual work and errors.
